As the generative AI boom accelerates across Southeast Asia, enterprises and government agencies are racing to secure the computational power needed to train and deploy large language models (LLMs). For most IT leaders, the default reflex is to turn to the global public cloud giants (the "hyperscalers").

However, the conventional cloud playbook often falls short of the extreme demands of AI infrastructure. For intensive machine learning workloads, specialized local AI compute providers can offer clear advantages over global hyperscalers in four areas: bare-metal customization, latency, data sovereignty, and specialized technical support.

The Virtualization Tax and the GPU Shortage

Global hyperscalers are built to serve millions of diverse customers running traditional web and enterprise applications. To do this efficiently, they rely heavily on hardware virtualization. For high-performance AI training, however, this introduces a "hypervisor tax": the performance overhead incurred when an extra layer of software sits between the workload and the hardware.

Specialized AI cloud providers avoid this overhead by offering bare-metal GPU clusters. With direct, unmediated access to top-tier hardware (such as NVIDIA H100s or A800s connected via non-blocking InfiniBand networks), workloads run without a hypervisor layer taking a share of the computing power and memory bandwidth.
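To make the effect of a hypervisor tax concrete, the following is a minimal back-of-the-envelope sketch, not a benchmark. Every number in it (the total training compute, GPU count, peak throughput, utilization, and the 5% overhead) is a hypothetical placeholder chosen only to keep the arithmetic easy to follow, and the function name `training_hours` is likewise an assumption made for illustration. Real overheads vary by workload and platform and should be measured.

```python
def training_hours(total_flop: float, gpus: int, peak_flops_per_gpu: float,
                   utilization: float, overhead: float = 0.0) -> float:
    """Estimate wall-clock hours for a training run.

    overhead is the fraction of throughput lost to an extra software
    layer (0.0 models bare metal). All inputs are illustrative.
    """
    if not 0.0 < utilization <= 1.0:
        raise ValueError("utilization must be in (0, 1]")
    if not 0.0 <= overhead < 1.0:
        raise ValueError("overhead must be in [0, 1)")
    # Throughput the cluster actually delivers, in FLOP per second.
    effective_flops = gpus * peak_flops_per_gpu * utilization * (1.0 - overhead)
    return total_flop / effective_flops / 3600.0


# Hypothetical inputs, not measurements of any real cluster or model.
TOTAL_FLOP = 1.0e21   # total compute the training run needs
GPUS = 64             # GPUs in the cluster
PEAK = 1.0e15         # peak FLOP/s per GPU (placeholder value)
UTILIZATION = 0.4     # fraction of peak the training job sustains

bare_metal = training_hours(TOTAL_FLOP, GPUS, PEAK, UTILIZATION)
virtualized = training_hours(TOTAL_FLOP, GPUS, PEAK, UTILIZATION, overhead=0.05)

print(f"bare metal:  {bare_metal:.2f} h")
print(f"virtualized: {virtualized:.2f} h (+{virtualized - bare_metal:.2f} h)")
```

Because the overhead divides the effective throughput, even a small percentage stretches the whole run by the same proportion, and the cost grows with every additional GPU-hour billed.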

Furthermore, global hyperscalers are currently facing well-documented, systemic GPU shortages. Securing a large quota of high-end GPUs on public clouds often requires navigating long waitlists or locking into rigid, multi-year enterprise contracts. Localized AI clouds, built exclusively for high-density computing, offer far greater elasticity and dedicated resource allocation without the bureaucratic red tape.

Navigating Data Sovereignty in Southeast Asia

Southeast Asia is not a single, homogeneous regulatory bloc. Countries across the region are rapidly enforcing data protection laws that restrict how personal data may be transferred across borders, such as Thailand's Personal Data Protection Act (PDPA) and Indonesia's Personal Data Protection (PDP) Law.

Global cloud providers often route data through centralized regional hubs (frequently located in Singapore) to balance their network loads. For governments dealing with civic records, or hospitals processing medical imaging data, sending sensitive information across national borders can create a serious compliance risk.

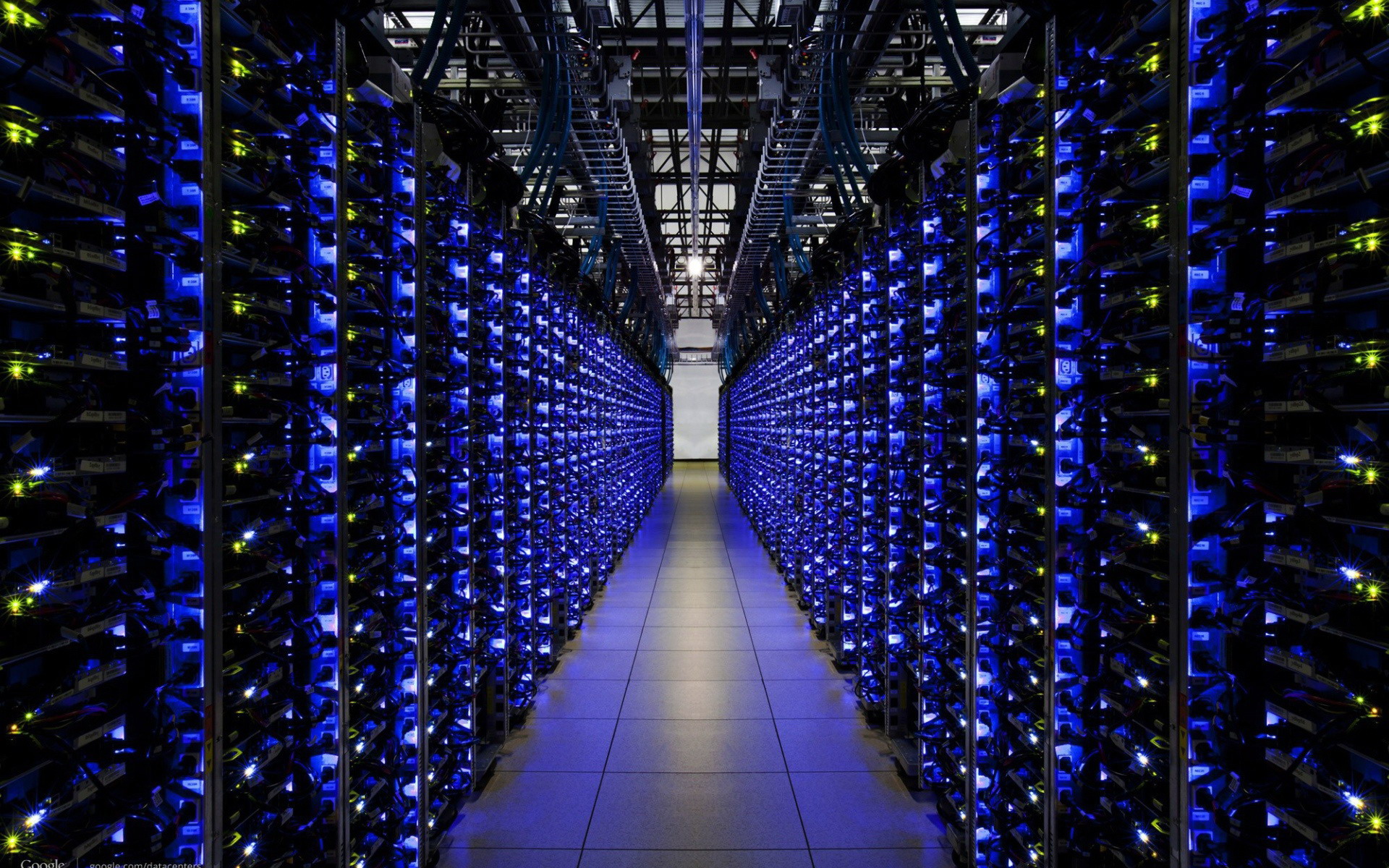

Local AI infrastructure providers address this by committing to in-country data residency. By using in-country Tier IV data centers, they keep proprietary models and datasets within national borders, which substantially reduces an organization's exposure to cross-border regulatory liabilities.
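A residency commitment is easier to trust when it can be audited. The sketch below shows one way such a check could look, assuming the organization keeps an inventory of where each model or dataset is stored. The `Asset` type, the `residency_violations` function, and the inventory entries are all hypothetical names invented for this illustration. They are not taken from any provider's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Asset:
    """A stored model or dataset (hypothetical inventory record)."""
    name: str
    storage_country: str  # ISO 3166-1 alpha-2 code, e.g. "TH" or "ID"


def residency_violations(assets: list[Asset], allowed_country: str) -> list[Asset]:
    """Return every asset stored outside the allowed country."""
    allowed = allowed_country.upper()
    return [a for a in assets if a.storage_country.upper() != allowed]


# Hypothetical inventory for an organization that must keep data in Thailand.
inventory = [
    Asset("patient-imaging-archive", "TH"),
    Asset("fine-tuned-model-weights", "TH"),
    Asset("training-log-backups", "SG"),
]

violations = residency_violations(inventory, "TH")
for asset in violations:
    print(f"{asset.name} is stored in {asset.storage_country}, outside TH")
```

In this made-up inventory only the backups stored in Singapore are flagged. A real audit would need to draw the storage locations from the provider's own records, and would also have to cover replicas, caches, and logs.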

The Value of On-the-Ground, Expert O&M

Managing a massive GPU cluster is fundamentally different from managing standard cloud servers. Issues like InfiniBand network congestion, thermal throttling in high-density racks, and CUDA driver conflicts require highly specialized knowledge.

When a hardware or network bottleneck occurs on a global public cloud, enterprises are often relegated to generic support ticketing systems and can wait days for escalation. In contrast, local AI compute providers offer specialized Operations and Maintenance (O&M) teams physically located in the region. With this 24/7 on-the-ground expertise, complex infrastructure bottlenecks can be diagnosed and resolved in hours rather than days, helping to protect uptime for mission-critical AI workloads.

The Strategic Path Forward

Building the next generation of smart cities and enterprise AI in Southeast Asia requires more than just renting servers; it requires a tailored, high-performance computing foundation.

While global hyperscalers excel at general-purpose computing, the unique demands of LLM training and AI inference require a different approach. For organizations looking to maximize their AI ROI, prioritizing localized, bare-metal AI infrastructure providers is a strategic step toward better performance, stronger compliance, and greater operational agility.